Vaisakh Shaj

Postdoctoral Researcher at the University of Edinburgh, UK

Room 234, Informatics Forum

Edinburgh, Scotland

United Kingdom EH8 9AB

Welcome to my personal website. I am a Postdoctoral Researcher in AI and Machine Learning at the University of Edinburgh, working with Prof Amos Storkey. I am affiliated with the Institute for Adaptive and Neural Computation, School of Informatics.

I did my PhD in Machine Learning for Control under Prof Gerhard Neumann at the ALR Lab, Karlsruhe Institute of Technology. Before that, I was a Data Scientist at the cybersecurity firm McAfee (Intel Security). Previously, I worked at Intel for two years. I hold a postgraduate degree in Machine Learning and Computing from the Indian Institute of Space Science and Technology.

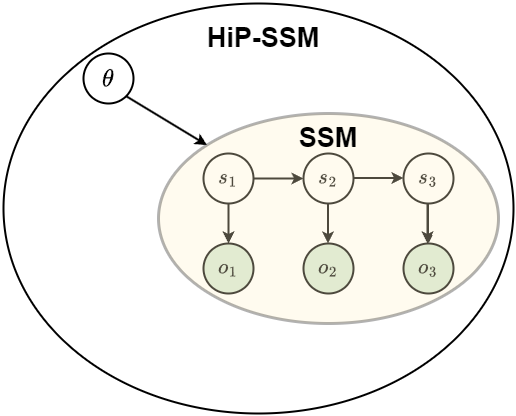

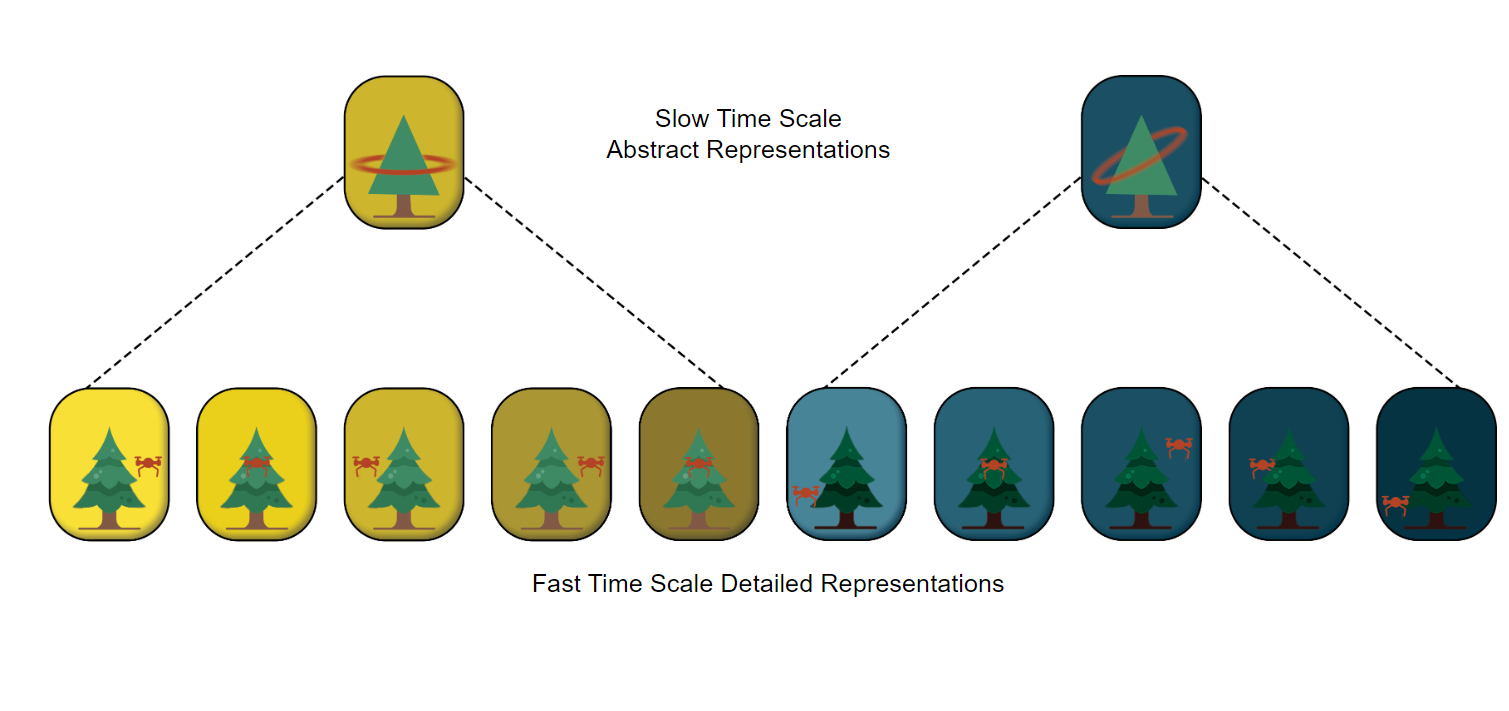

My doctoral thesis focussed on building “World Models With Hierarchical Temporal Abstractions” based on probabilistic and Bayesian principles.

Research

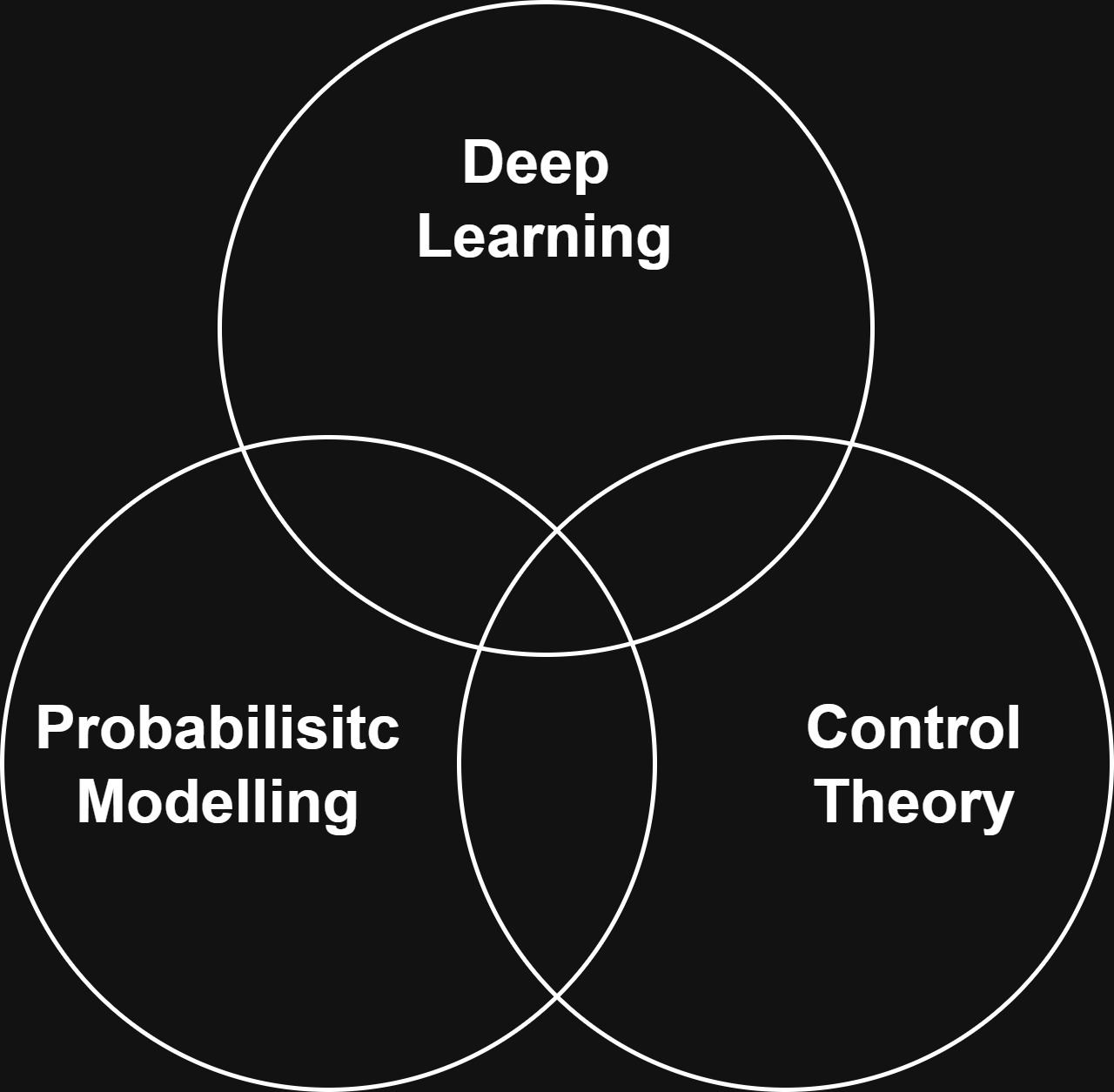

My research interests and expertise lie at the intersection of Deep Learning, Probabilistic Modelling, and Control Theory. My current goal is to build scalable neural network models grounded in probabilistic and control-theoretic principles. Currently, my application focus is on building efficient, controllable, and safe large language models (LLMs) and intelligent agents.

Other research directions that I have explored and am still curious about include Hierarchical Modelling, Long Horizon Reasoning/Control, and Learning under Non-Stationarity (Meta/Continual Learning, etc.).

🌈 Diversity and Inclusion Statement: I care deeply about making workplaces more diverse and about the inclusion of women, LGBTQ+ people, and under-represented minorities in AI research.

news

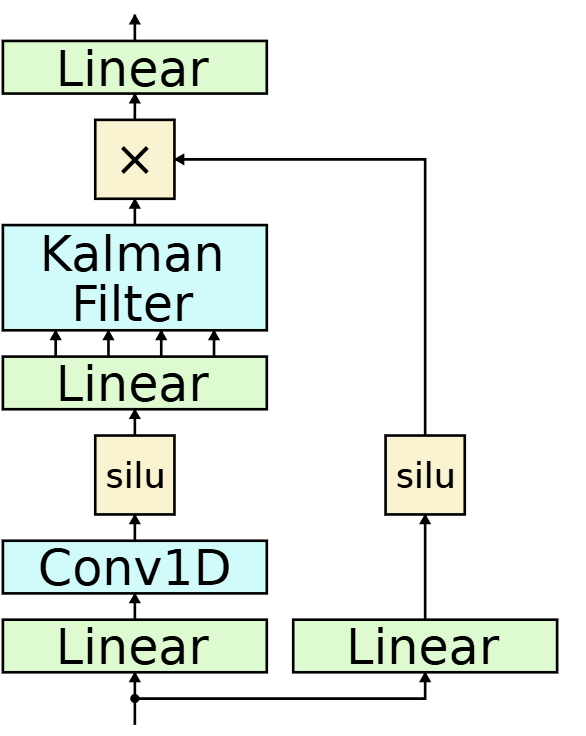

| Feb 11, 2026 | New preprint on Parallel Bayesian Filters For Efficient Language Modelling is out! Also accepted at the 1st Workshop on Epistemic Intelligence in Machine Learning (EIML) at EurIPS 2025. |
|---|---|
| Oct 11, 2024 | Our new paper on Adaptive World Models and Non-Stationary RL was accepted at the NeurIPS 2024 Adaptive Foundation Models Workshop. |
| Dec 9, 2023 | Attending NeurIPS 2023 in New Orleans, US. |
| Nov 15, 2023 | Gave a talk on “Multi Time Scale World Models” at the MILA Robot Learning Seminar. Video Link |
| Sep 21, 2023 | PhD work “Multi Time Scale World Models” accepted at NeurIPS 2023 as a Spotlight (top 3% of all submitted papers). |

selected publications

2026

2024

- Learning World Models With Hierarchical Temporal Abstractions: A Probabilistic Perspective. PhD Thesis, preprint arXiv:2404.16078, 2024

2023

2021

2020

- Action-Conditional Recurrent Kalman Networks For Forward and Inverse Dynamics Learning. In Conference on Robot Learning, 2020

2019

- Zero-shot knowledge distillation in deep networks (ICML 2019). In International Conference on Machine Learning, 2019